Giving your AI agents a robust feedback mechanism is the single biggest lever you have to improve their reliability. It's the difference between a simple chatbot that gets stuck in a loop and an autonomous manager that can handle complex infrastructure and actually improve its own output over time.

As we’ve discussed in our comprehensive guide to AI Agent workflows, if you’ve been using tools like Gemini CLI or Claude Code, you’ve probably seen them "fly blind." They try a command, it fails, and they just keep hammering away at the same strategy. To fix this, you don't need a better prompt; you need a feedback loop.

The Sensory Input: Development Logs

During development, your agent’s primary "eyes and ears" are the console logs. If your agent is writing code but can't read the terminal output (stdout and stderr) of the tests it runs, it can't self-correct.

By ensuring your testing suite generates clear, structured logs, you allow the agent to observe exactly where a failure occurred, analyze the stack trace, and iterate on a fix without you having to intervene.
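As a rough illustration of handing test output back to an agent, here is a minimal Python sketch. The helper name `run_and_capture` and the shape of the returned dictionary are assumptions made for this example, not part of any agent tool's API; the idea is only that the exit code, stdout, and stderr all end up somewhere the agent can read.

```python
import subprocess
import sys


def run_and_capture(cmd: list[str], timeout: int = 300) -> dict:
    """Run a command and return its exit code plus everything it printed.

    Hypothetical helper: the returned dict is what you would hand back
    to the agent so it can read the failure instead of guessing.
    """
    result = subprocess.run(
        cmd,
        capture_output=True,  # collect stdout and stderr instead of losing them
        text=True,            # decode bytes to str
        timeout=timeout,      # raise if a hung test suite never returns
    )
    return {
        "ok": result.returncode == 0,
        "exit_code": result.returncode,
        "stdout": result.stdout,
        "stderr": result.stderr,
    }
```

For example, `run_and_capture([sys.executable, "-m", "pytest", "-x"])` would return the full pytest output (assuming pytest is installed), including the stack trace of the first failing test.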

Always output logs to the console or a file that the agent can read. Structured logging (like JSON) makes it even easier for agents to parse errors accurately.
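One way to get structured logs is a small JSON formatter on top of Python's standard `logging` module. This is a sketch under assumptions: the field names (`level`, `logger`, `message`, `traceback`) and the logger name `agent-feedback` are choices made for this example, not a standard.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        if record.exc_info:
            # Include the stack trace so the agent can see where the failure occurred.
            payload["traceback"] = self.formatException(record.exc_info)
        return json.dumps(payload)


def build_logger(name: str = "agent-feedback", stream=None) -> logging.Logger:
    """Return a logger that writes JSON lines to stdout (or a given stream)."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.handlers.clear()  # avoid duplicate lines if called twice
    handler = logging.StreamHandler(stream if stream is not None else sys.stdout)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    logger.propagate = False
    return logger
```

Calling `build_logger().exception("test failed")` inside an `except` block emits a single line that the agent can load with `json.loads` rather than scraping free-form text.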

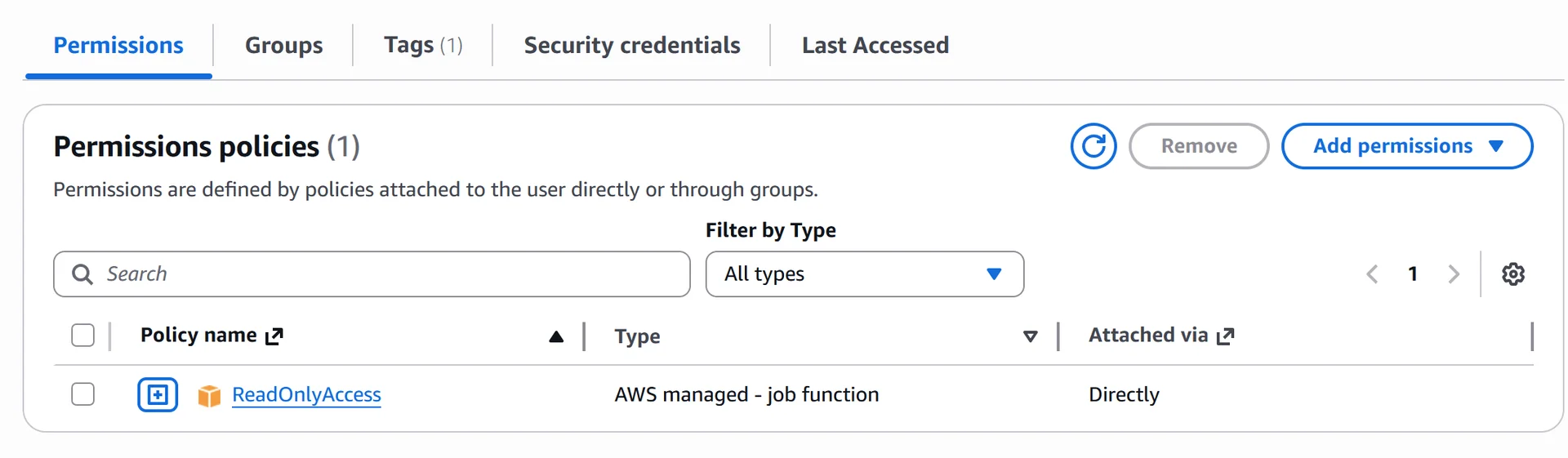

Production Intelligence: The Secure Bridge

The real power of feedback loops comes when you move beyond development and into the real world. But how do you give an AI access to production data without creating a security nightmare?

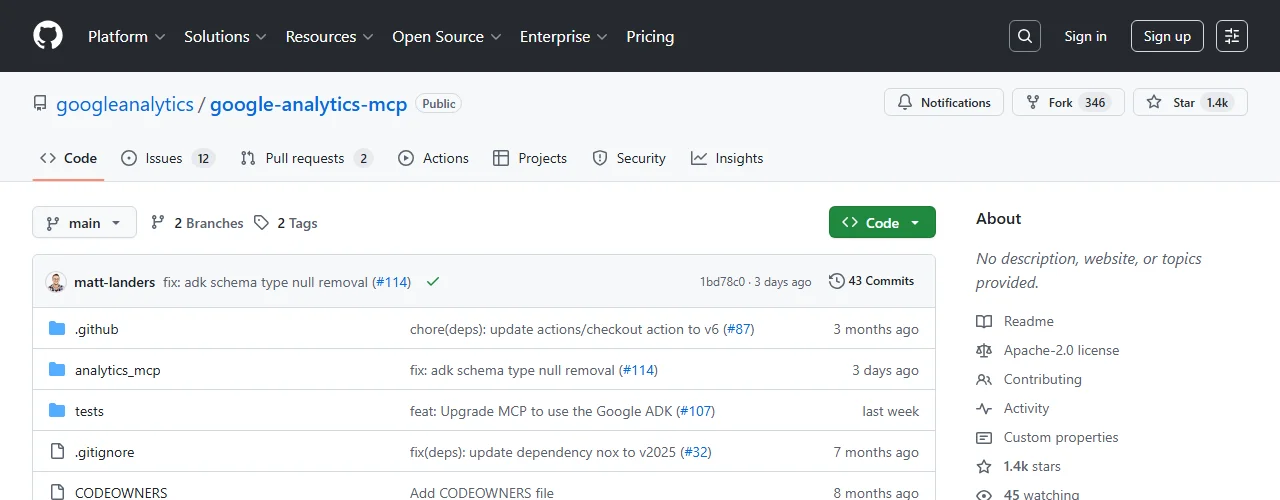

The answer is secure, read-only access. Today, we successfully configured the Google Analytics MCP server for our own CLI.

This setup is a game-changer. Given a read-only window into GA4, the agent identified several 404 errors and broken internal links on this very site that I hadn't noticed. With routine checks of this data on a schedule, the agent can proactively identify issues before your users even report them.

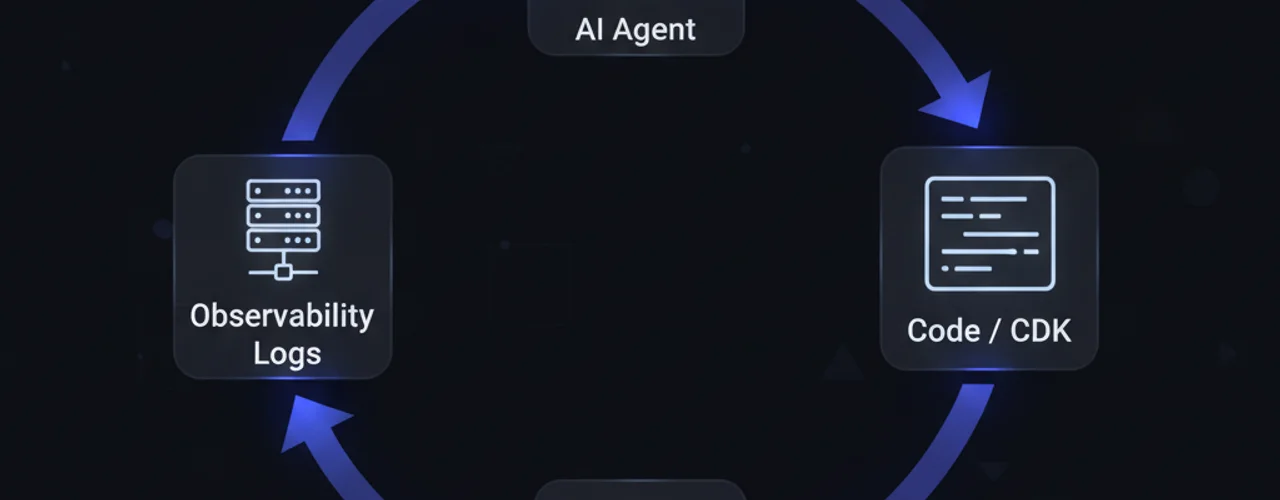

The Big Lever: Iterative Infrastructure

Perhaps the biggest lever I've discovered recently is giving agents the power to manage and improve AWS infrastructure iteratively. When an agent is tasked with deploying a new stack, it shouldn't just "push and pray."

Instead, we provide it with secure access to the infrastructure feedback:

- It reviews CloudFormation events during deployment.

- It analyzes CloudWatch logs to see if a new Lambda function is timing out.

- It iterates on the CDK code until the stack deploys cleanly and the functions stop timing out.
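The review steps above can be sketched as two small filters that boil raw AWS output down to what the agent needs to act on. This is an illustration under assumptions: the helper names are hypothetical, the event dictionaries follow the shape returned by boto3's `describe_stack_events`, and the timeout check relies on the "Task timed out" message Lambda writes to its logs. The boto3 call is imported lazily, returns only the first page of events, and needs read-only AWS credentials.

```python
def failed_events(stack_events: list[dict]) -> list[dict]:
    """Keep only events whose status ends in _FAILED, with the fields an agent needs."""
    return [
        {
            "resource": event.get("LogicalResourceId"),
            "status": event.get("ResourceStatus"),
            "reason": event.get("ResourceStatusReason", ""),
        }
        for event in stack_events
        if str(event.get("ResourceStatus", "")).endswith("_FAILED")
    ]


def timed_out(log_messages: list[str]) -> list[str]:
    """Return the log lines that report a Lambda timeout."""
    return [message for message in log_messages if "Task timed out" in message]


def fetch_stack_events(stack_name: str) -> list[dict]:
    """Fetch the first page of stack events (requires boto3 and AWS credentials)."""
    import boto3  # lazy import so the pure helpers above work without boto3

    client = boto3.client("cloudformation")
    return client.describe_stack_events(StackName=stack_name)["StackEvents"]
```

Keeping the filters separate from the AWS call means the agent (or you) can test them against saved events without touching a live account.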

Infrastructure Leverage

Agents that manage infrastructure and improve it iteratively aren't just "automation"; they're leverage. They allow a single AI hobbyist to manage global-scale architecture that would traditionally require a full DevOps team.

How to Set Up Your Own Loop

Ready to level up your agents? Follow this hierarchy to build your own autonomous feedback cycle:

- Development: Ensure all your scripts and tests output to a readable stream.

- Verification: Always ask the agent to "verify you have production logs" before it finalizes a task.

- Security: Use the Model Context Protocol (MCP) to provide high-level, scoped access to your data.

Level Up Your Insights

We just integrated the Google Analytics MCP to give our agent real-time feedback from techhacks.io. Check out the repo to see how you can do the same.

Final Verdict

The era of "one-off" AI prompts is ending. The future belongs to the iterative agent. By building these feedback loops, you aren't just saving time—you're building a system that learns from its environment and gets better with every deployment.